🗞 This Week in News

Mapping the “Mind” of an LLM - XAI work from Anthropic identified how millions of concepts are represented inside Claude 3 Sonnet. This is the first detailed look inside a modern, production-grade LLM. See below for a more detailed look at this research.

Stanford releases a 6-month follow-up report on the Foundation Model Transparency Index. The report scores 14 companies, each of which prepared a disclosure about its flagship foundation model (e.g. how much energy was used in training the model). The average score is 58/100. Developers scored highest on areas like the capabilities of their model and documentation for downstream deployers. Areas like data access, evaluations of model trustworthiness, and downstream impact remain the most opaque.

🥁 Interesting Products & Features

PaliGemma from Google - 3B parameter multimodal model based on SigLIP, a vision model, and Gemma, an LLM (the model is a composition of a Transformer decoder and a Vision Transformer image encoder). PaliGemma can be fine-tuned for image and short video captioning, visual question answering, text reading, object detection, and object segmentation. Because it is also available for commercial use, we will likely see a lot of custom multimodal models built for specific applications. Blog with more resources.

Aya multilingual model from Cohere - open-source dataset, models, and annotations covering over 100 languages, backed by a community of 3k independent researchers. The project promises to advance multilingual AI research.

Mistral fine-tune codebase - a lightweight codebase that enables memory-efficient and performant fine-tuning of Mistral's models using LoRA.

📄 Interesting Papers

SirLLM: Streaming Infinite Retentive LLM - Allows LLMs to maintain longer memory during infinite-length dialogues without the need for fine-tuning. SirLLM utilizes a Token Entropy metric and a memory decay mechanism to filter key phrases, endowing LLMs with both long-lasting and flexible memory. Authors from Shanghai Jiao Tong University. GitHub.

RectifID: Personalizing Rectified Flow with Anchored Classifier Guidance - Customizing diffusion models to generate identity-preserving images from user-provided reference images is extremely challenging. This paper applies classifier guidance, a training-free technique that steers diffusion models using an existing classifier, to personalized image generation. The authors show advantageous personalization results for human faces, live subjects, and certain objects. Authors from Peking University. GitHub.

Large Language Models Reflect Human Citation Patterns with a Heightened Citation Bias - While LLMs can aid in citation generation, they may also amplify existing biases and introduce new ones, potentially skewing scientific knowledge dissemination. This paper reveals a remarkable similarity between human and LLM citation patterns, but with a more pronounced high citation bias in GPT-4, which persists even after controlling for publication year, title length, number of authors, and venue. Authors from Vrije Universiteit Brussel.

Understanding the differences in Foundation Models: Attention, State Space Models, and Recurrent Neural Networks - a great resource for understanding differences between attention-based models, state space models, and RNNs. This paper introduces the Dynamical Systems Framework (DSF), which allows a principled investigation of all these architectures in a common representation and facilitates rigorous comparisons, providing new insights on the distinctive characteristics of each model class. Authors from ETH Zurich.

Mapping the “Mind” of an LLM: Extracting Interpretable Features from a production-grade LLM

This past week, the Anthropic Mechanistic Interpretability team published exciting results on extracting interpretable features from a production-grade LLM (Claude 3 Sonnet). This builds on previous work from the team. In October 2023, the Anthropic team published a paper demonstrating that a weak dictionary learning algorithm called a sparse autoencoder could be used to generate learned features from a trained model, and that these features offer a more monosemantic unit of analysis than the model's neurons themselves. In short: sparse autoencoders produce interpretable features for LLMs.

Some highlights from the paper:

The resulting features are highly abstract: multilingual, multimodal, and generalizing between concrete and abstract references.

These features can be used to influence the behavior of LLMs. See Golden Gate Bridge Claude, where Claude is convinced it is the Golden Gate Bridge.

They found features related to a broad range of safety concerns, including deception, sycophancy, bias, and dangerous content.
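The idea of using a feature to influence behavior can be illustrated with a toy sketch. This is not Anthropic's code: the `steer` helper, the 3-dimensional activation, and reading the feature value as a projection onto a unit-norm direction are all assumptions made for illustration. It shows one plausible mechanism, which is shifting an internal activation along a feature's direction until that feature reaches a chosen value.

```python
import numpy as np

def steer(activation, feature_direction, current_value, target_value):
    """Shift an activation so one feature moves from current_value to target_value.

    Hypothetical helper: assumes the feature contributes linearly along
    feature_direction, so changing its value only moves the activation
    along that direction.
    """
    return activation + (target_value - current_value) * feature_direction

# Toy 3-dimensional activation and a unit-norm feature direction (both made up).
activation = np.array([0.5, -1.0, 2.0])
direction = np.array([0.0, 1.0, 0.0])

# Assumption: the feature's current value is the projection onto its direction.
current = float(activation @ direction)

# Clamp the feature to an artificially high value.
steered = steer(activation, direction, current, target_value=10.0)
```

Only the component along the chosen direction changes; everything orthogonal to it is left untouched.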

Resources:

Terminology:

Mechanistic interpretability seeks to understand neural networks by breaking them into components that are more easily understood than the whole. By understanding the function of each component, and how they interact, we hope to be able to reason about the behavior of the entire network. [Source]

Sparse Dictionary Learning aims at finding a sparse representation of the input data in the form of a linear combination of basic elements as well as those basic elements themselves. These elements are called atoms and they compose a dictionary. [Source]
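To make the definition concrete, here is a minimal NumPy sketch of the representation itself (not of the learning step). The dictionary values and the code vector are made up for illustration.

```python
import numpy as np

# Hypothetical dictionary: 4 atoms (columns) in a 3-dimensional space.
# More atoms than dimensions makes the dictionary "overcomplete".
dictionary = np.array([
    [1.0, 0.0, 0.0, 0.6],
    [0.0, 1.0, 0.0, 0.8],
    [0.0, 0.0, 1.0, 0.0],
])

# Sparse code: only 2 of the 4 coefficients are non-zero.
code = np.array([2.0, 0.0, 0.0, -1.0])

# The signal is a linear combination of the active atoms only.
signal = dictionary @ code

# Indices of the atoms that actually contribute to the signal.
active_atoms = np.flatnonzero(code)
```

Dictionary learning would fit both `dictionary` and the per-input `code` from data; here they are fixed by hand to show what "sparse linear combination of atoms" means.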

Autoencoder is a type of neural network that tries to re-create the input after compressing it down into an “information bottleneck” layer. Essentially, an autoencoder compresses an input into a significantly smaller representation and then attempts to recreate it as closely as possible.
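A rough sketch of the bottleneck idea, assuming the simplest possible case: a linear autoencoder with no bias terms. Instead of training, the weights are taken from an SVD of the data, which gives the best linear rank-k reconstruction. The data, sizes, and variable names are illustrative only.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy data: 100 samples in 8 dimensions that lie on a 2-dimensional subspace.
latent = rng.normal(size=(100, 2))
mixing = rng.normal(size=(2, 8))
data = latent @ mixing

# Top-k right singular vectors span the best k-dimensional linear bottleneck.
_, _, vt = np.linalg.svd(data, full_matrices=False)
k = 2
encoder = vt[:k].T  # shape (8, 2): compress 8 numbers into 2
decoder = vt[:k]    # shape (2, 8): expand 2 numbers back into 8

codes = data @ encoder            # the "information bottleneck" layer
reconstruction = codes @ decoder  # attempt to re-create the input
error = np.mean((data - reconstruction) ** 2)
```

Because the toy data really is 2-dimensional, the reconstruction error is essentially zero. Real autoencoders are nonlinear and trained by gradient descent, and their reconstructions are usually lossy.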

Sparse Autoencoder is a type of autoencoder that employs sparsity to achieve an information bottleneck. It seeks to decompose data into a weighted sum of sparsely active components. Learn more about their implementation here.
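As a hedged illustration, the forward pass and loss of a sparse autoencoder might look like the sketch below. The layer sizes, random untrained weights, and the `l1_coeff` value are all assumptions, and the exact architecture and loss used in the Anthropic work may differ.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes: more features than input dimensions.
d_model, n_features = 4, 16
W_enc = rng.normal(scale=0.5, size=(d_model, n_features))
b_enc = np.zeros(n_features)
W_dec = rng.normal(scale=0.5, size=(n_features, d_model))
b_dec = np.zeros(d_model)

def sae_forward(x, l1_coeff=0.1):
    """Return feature activations, reconstruction, and the training loss."""
    # ReLU keeps feature activations non-negative.
    features = np.maximum(0.0, x @ W_enc + b_enc)
    # The reconstruction is a weighted sum of decoder rows.
    reconstruction = features @ W_dec + b_dec
    mse = np.mean((x - reconstruction) ** 2)
    # The L1 penalty pushes most feature activations toward zero.
    l1 = np.mean(np.sum(np.abs(features), axis=-1))
    return features, reconstruction, mse + l1_coeff * l1

x = rng.normal(size=(8, d_model))  # stand-in for a batch of model activations
features, reconstruction, loss = sae_forward(x)
```

Note that the bottleneck here comes from the sparsity penalty rather than from a narrow layer: the feature layer is wider than the input, but training against this loss encourages only a few features to be active for any given input.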

Monosemanticity is the property of a unit in a neural network (a neuron or a learned feature) responding to a single, specific concept, so that there is a one-to-one mapping between concepts and units. Monosemantic units could enable more interpretable ML systems.

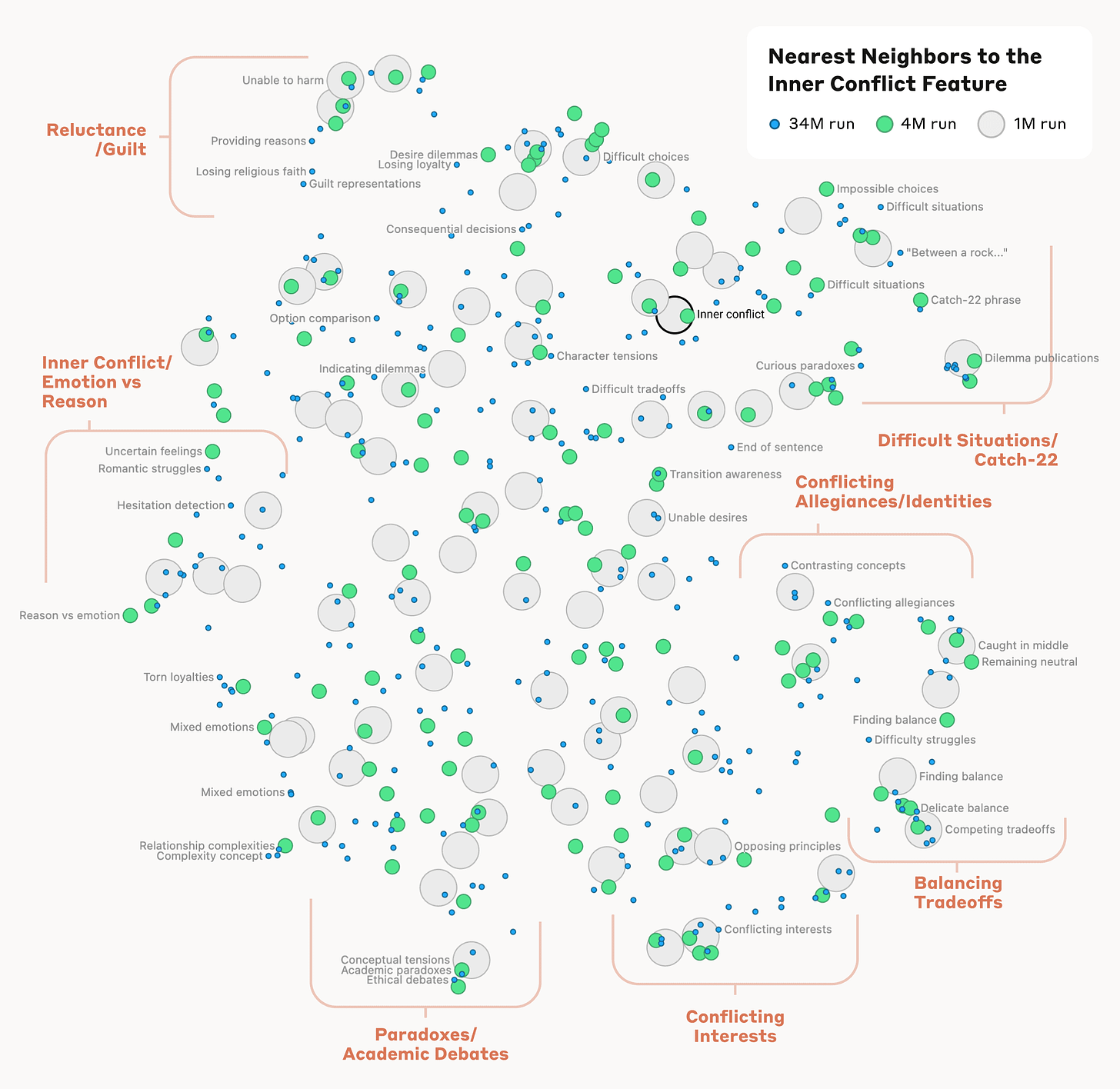

A map of the features near an "Inner Conflict" feature, including clusters related to balancing tradeoffs, romantic struggles, conflicting allegiances, and catch-22s. IMAGE SOURCE.