🗞 This Week in News

Google DeepMind's Frontier Safety Framework describes how to analyze and evaluate frontier models for risks. The technical report also covers risk mitigation strategies and outlines future work.

🥁 Interesting Products & Features

Open Release of Grok-1 - xAI released the weights and architecture of Grok-1, a 314 billion parameter Mixture-of-Experts model.

Hermes-2 Θ is a merged and then further RLHF'ed version of the Hermes 2 Pro model and Meta's Llama-3 Instruct model.

📄 Interesting Papers

LoRA Learns Less and Forgets Less - This paper shows that, in most settings, LoRA substantially underperforms full finetuning, but it exhibits a desirable form of regularization: it better maintains the base model's performance on tasks outside the target domain (it forgets less). Authors from Columbia.

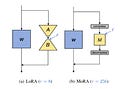

MoRA: High-Rank Updating for Parameter-Efficient Fine-Tuning - This paper finds that LoRA’s low-rank updating mechanism may limit the ability of LLMs to effectively learn and memorize new knowledge. The authors propose a new method called MoRA, which employs a square matrix to achieve high-rank updating while maintaining the same number of trainable parameters. MoRA outperforms LoRA on memory-intensive tasks and achieves comparable performance on other tasks. Authors from Beihang University.
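
To make the "same number of trainable parameters" claim concrete, here is a minimal sketch of the parameter budget. It assumes a single weight matrix of shape d × k, a LoRA rank r, and a MoRA square matrix whose side is the largest integer that fits within LoRA's budget. The function names are made up for illustration, and the sizing rule is our reading of the paper, not its reference code.

```python
import math


def lora_params(d: int, k: int, r: int) -> int:
    """Trainable parameters for LoRA: two low-rank factors, (d x r) and (r x k)."""
    return r * (d + k)


def mora_square_size(d: int, k: int, r: int) -> int:
    """Side length of the square matrix that fits in the same budget (assumed rule)."""
    return math.isqrt(lora_params(d, k, r))


def mora_params(d: int, k: int, r: int) -> int:
    """Trainable parameters for a single square matrix of that side length."""
    side = mora_square_size(d, k, r)
    return side * side


if __name__ == "__main__":
    d = k = 4096  # hypothetical hidden size
    r = 8         # hypothetical LoRA rank
    print("LoRA params:", lora_params(d, k, r))            # 65536
    print("MoRA square side:", mora_square_size(d, k, r))  # 256
    print("MoRA params:", mora_params(d, k, r))            # 65536
```

With these example numbers, the LoRA update has rank at most 8, while a 256 × 256 square matrix can reach rank 256 on the same budget. That gap is the intuition behind "high-rank updating".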

Self-Rectifying Diffusion Sampling with Perturbed-Attention Guidance - The PAG method proposed in this paper improves image quality without requiring further training. PAG is designed to progressively enhance the structure of synthesized samples throughout the denoising process by considering the self-attention mechanisms' ability to capture structural information. Authors from Korea University.

EfficientTrain++: Generalized Curriculum Learning for Efficient Visual Backbone Training - An off-the-shelf, easy-to-implement algorithm for efficiently training foundation visual backbones. It reduces the training time of various popular models (e.g., ResNet, ConvNeXt, DeiT, PVT, Swin, CSWin, and CAFormer) by 1.5−3.0× on ImageNet-1K/22K without sacrificing accuracy. [GitHub] Authors from Tsinghua University.

Gemini 1.5 Technical Report - 195 pages of interesting details, especially around safety and alignment. The report highlights real-world use cases: collaborating with Gemini 1.5 on work-related tasks yielded 26 to 75% time savings across 10 different job categories. In another example, given a grammar manual for Kalamang, a language with fewer than 200 speakers worldwide, the model learns to translate English to Kalamang at a similar level to a person who learned from the same content. Authors from Google DeepMind.

Large Language Model Bias Mitigation from the Perspective of Knowledge Editing - This paper introduces a new bias mitigation benchmark, BiasKE, which assesses debiasing performance by complementary metrics on fairness, specificity, and generalization. They also propose a novel debiasing method, Fairness Stamp (FAST), which enables editable fairness through fine-grained calibration on individual biased knowledge. Authors from Zhejiang University.

Matching domain experts by training from scratch on domain knowledge - The authors trained a relatively small 124M-parameter GPT-2 model on 1.3 billion tokens of domain-specific knowledge. Small models trained on the neuroscience literature succeeded when they were trained from scratch using a tokenizer specifically trained on neuroscience text or when the neuroscience literature was used to finetune a pretrained GPT-2. Results indicate that expert-level performance may be attained by even small LLMs through domain-specific, auto-regressive training approaches. Authors from University College London.

Towards a fully declarative neuro-symbolic language - The authors argue that neuro-symbolic systems are missing a core property of reasoning systems: declarativeness. This gap stems from the functional nature of neural predicates, which they inherit from neural networks. The authors propose and implement a general framework for fully declarative neural predicates. They show that the declarative extension preserves learning and reasoning capabilities and can answer arbitrary queries despite being trained on only a single query type. Authors from Delft University of Technology.

🧠 Sources of Inspiration

Implement Llama 3 from scratch [GitHub]